Decision Table Testing: A black-box test design technique in which test cases are designed to execute the combinations of inputs and/or stimuli (causes) shown in a decision table.

Decision Table: A table showing combinations of inputs and/or stimuli (causes) with their associated outputs and/or actions (effects), which can be used to design test cases. This technique is used to test all possible combinations of conditions, relationships, and constraints. Decision tables are usually applied at the integration, system, and acceptance test levels, but the technique can also be used for component testing.

The table should describe relationships between conditions and actions.
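As a rough illustration of how conditions relate to actions, the sketch below builds a decision table in Python by enumerating every combination of condition values. The login scenario, the condition names, and the `expected_action` rule are all hypothetical and chosen only for the example.

```python
from itertools import product

# Hypothetical conditions for a login form (assumed for illustration).
CONDITIONS = ["valid_username", "valid_password"]


def expected_action(valid_username: bool, valid_password: bool) -> str:
    """Hypothetical business rule mapping condition values to an action."""
    if valid_username and valid_password:
        return "grant_access"
    return "show_error"


def build_decision_table() -> list[dict]:
    """Return one column (rule) per combination of condition values."""
    columns = []
    for values in product([True, False], repeat=len(CONDITIONS)):
        rule = dict(zip(CONDITIONS, values))
        # The action is derived from the conditions of this column.
        rule["action"] = expected_action(**rule)
        columns.append(rule)
    return columns


table = build_decision_table()
for column in table:
    print(column)
```

With two boolean conditions the table has four columns; each additional boolean condition doubles that number, which is why large tables are often reduced by merging columns that lead to the same action.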

Template of Decision Table:

Example:

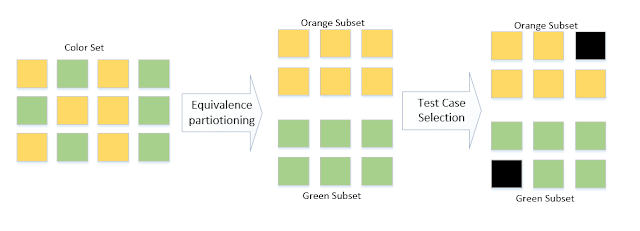

At least one test case should be created for each column. The number of tests increases when the conditions contain boundary values, because each boundary may need its own test. Boundary Value Analysis and Equivalence Partitioning complement the Decision Table technique.
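The effect of boundary values on the number of tests can be sketched as follows. The `ticket_price` function, its age threshold of 65, the prices, and the chosen ages are all assumptions made up for this example; they only show one way to derive tests from the columns.

```python
# Hypothetical system under test (assumed for illustration).
def ticket_price(age: int, is_member: bool) -> int:
    price = 100
    if age >= 65:      # senior discount
        price -= 30
    if is_member:      # membership discount
        price -= 10
    return price


# One entry per decision table column: condition values and expected effect.
COLUMNS = [
    {"senior": True, "member": True, "expected": 60},
    {"senior": True, "member": False, "expected": 70},
    {"senior": False, "member": True, "expected": 90},
    {"senior": False, "member": False, "expected": 100},
]

# Ages per partition: the boundary value first, then a mid-partition value.
AGES = {True: [65, 80], False: [64, 30]}


def generate_tests(with_boundaries: bool) -> list[tuple[int, bool, int]]:
    """Create (age, is_member, expected_price) tuples from the columns."""
    tests = []
    for col in COLUMNS:
        ages = AGES[col["senior"]]
        if not with_boundaries:
            ages = ages[1:]  # keep only the mid-partition value
        for age in ages:
            tests.append((age, col["member"], col["expected"]))
    return tests


def run_tests(tests: list[tuple[int, bool, int]]) -> list[bool]:
    """Return a pass/fail flag for every generated test."""
    return [ticket_price(age, member) == expected
            for age, member, expected in tests]
```

Here `generate_tests(False)` yields one test per column, while `generate_tests(True)` adds a boundary test to each column and so doubles the count.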

Types of defects: functional and non-functional.